DeepSeek AI Releases DeepSeekMath-V2: The Open Weights Maths Model That Scored 118/120 on Putnam 2024

How can an AI system prove complex, Olympic-level math problems in clear, natural language while also validating that its reasoning is actually correct? DeepSeek AI released Deep Sick Math-V2a large language model with open weights optimized for natural language theory that proves self-verification. The model is built on DeepSeek-V3.2-Exp-Baseworks as Parameter 685 b A mix of experts, available on Hugging Face under the Apache 2.0 license.

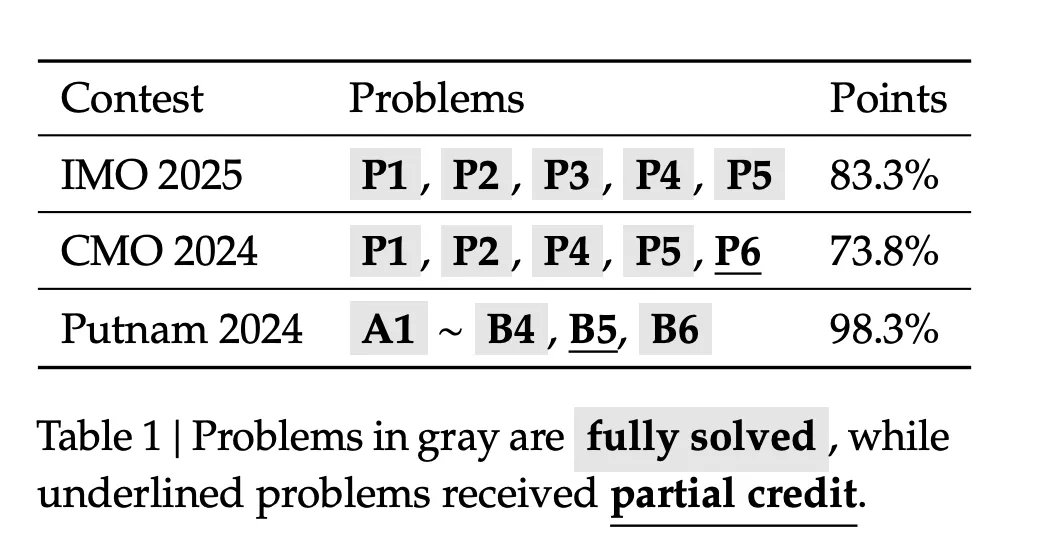

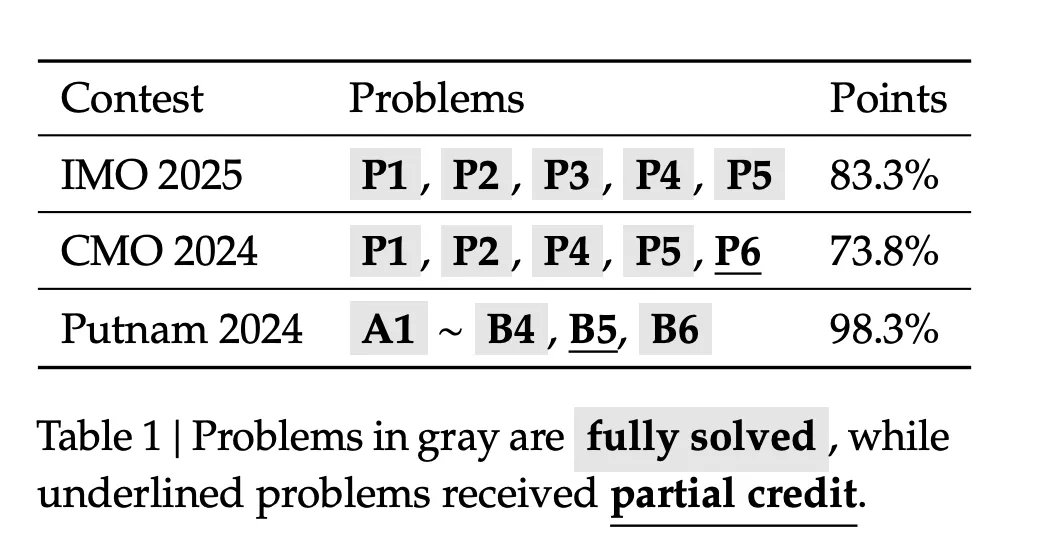

In the reviews, DeepSeekMath-V2 arrives Gold level scores in IMO 2025 and CMO 2024and achieves 118 out of 120 points at Putnam 2024 When used with a normalized test time calculation.

Why are the rewards for the final answer not enough?

State-of-the-art mathematical inference models use reinforcement learning that rewards only The final answer On standards such as AIME and HMMT. This approach pushed models from weak baselines to near saturation in short answer quizzes in about one year. (hug face)

However, the DeepSeek research team points out two structural problems:

- A correct numerical answer does not guarantee correct logic. The model may arrive at the correct number through algebraic errors that cancel out.

- Many tasks, such as Olympian proofs and theorem proofs, require a complete argument in natural language. These tasks do not have a single final numerical answer, so bonuses based on the standard answer do not apply.

Thus DeepSeekMath-V2 improves performance Quality of proof Rather than pure answer accuracy. The system evaluates whether the evidence is complete and logically sound, and uses this evaluation as the main learning signal.

Pre-production auditor training

The basic design is Auditor first. The DeepSeek research team is training an LLM-based auditor who can read the problem and candidate guide, then output natural language analysis and discrete quality scores in the set {0, 0.5, 1}.

Primary reinforcement learning data comes from The art of problem solving Contests. The search team is crawling 17.503 Problems in the method of proof From the Olympics, the team selection tests, and the post-2010 issues that explicitly require proof. These issues make up the basic set of cold start RL. Candidate proofs come from the DeepSeek-V3.2 reasoning model that is asked to iteratively improve its solutions, increasing detail but also creating many incomplete proofs. Human experts rate these proofs using criteria of 0, 0.5, 1, based on accuracy and completeness.

The auditor is trained with Group Proportional Policy Optimization (GRPO). The reward consists of two components:

- A Figure rewardwhich verifies that the verifier’s output follows a consistent template, including the analysis section and the final result in the box.

- A Score rewardwhich penalizes the absolute difference between the expected score and the expert score.

This phase produces a scorer who can grade proofs of Olympic style in a consistent manner.

Meta-validation of hallucinogenic criticism control

The validator can still manipulate the reward. He can produce the correct final result while inventing imaginary problems in the analysis. This would meet the numerical goal but would make the interpretations unreliable.

To address this matter, the research team presented A Definition verification. The definition checker reads the original problem, the evidence, and the reviewer’s analysis, and then evaluates whether the analysis is correct. It records aspects such as rephrasing steps, identifying real flaws, and consistency between the narrative and the final result.

The meta-verifier is also trained using GRPO, with its own format and point rewards. Its output, the definition quality score, is then used as an additional reward term for the primary auditor. Analyzes that indicate hallucinatory problems receive low descriptive scores, even if the final evidentiary score is correct. In experiments, this raises the average quality of analyzes evaluated around you 0.85 to 0.96 On split validation, keeping the accuracy of the proof score constant.

Self-verifying proof generator and sequential optimization

Once the verifier is robust, the DeepSeek research team trains Proof generator. The generator takes the problem and outputs the solution and solution Self-analysis Which follows the same verification address.

The generator bonus combines three signals:

- The auditor’s score on the evidence created.

- Agreement between self-reported score and validation score.

- Meta-validation score for self-analysis.

Officially, the main reward uses weights α = 0.76 To obtain the degree of proof and β = 0.24 For the self-analysis component, multiplied by the coordination term imposes the structure of the output. This prompts the generator to write proofs that the verifier accepts, and to be honest about the remaining issues. If he claims that the flawed evidence is perfect, he loses the reward through disagreement and low descriptive results.

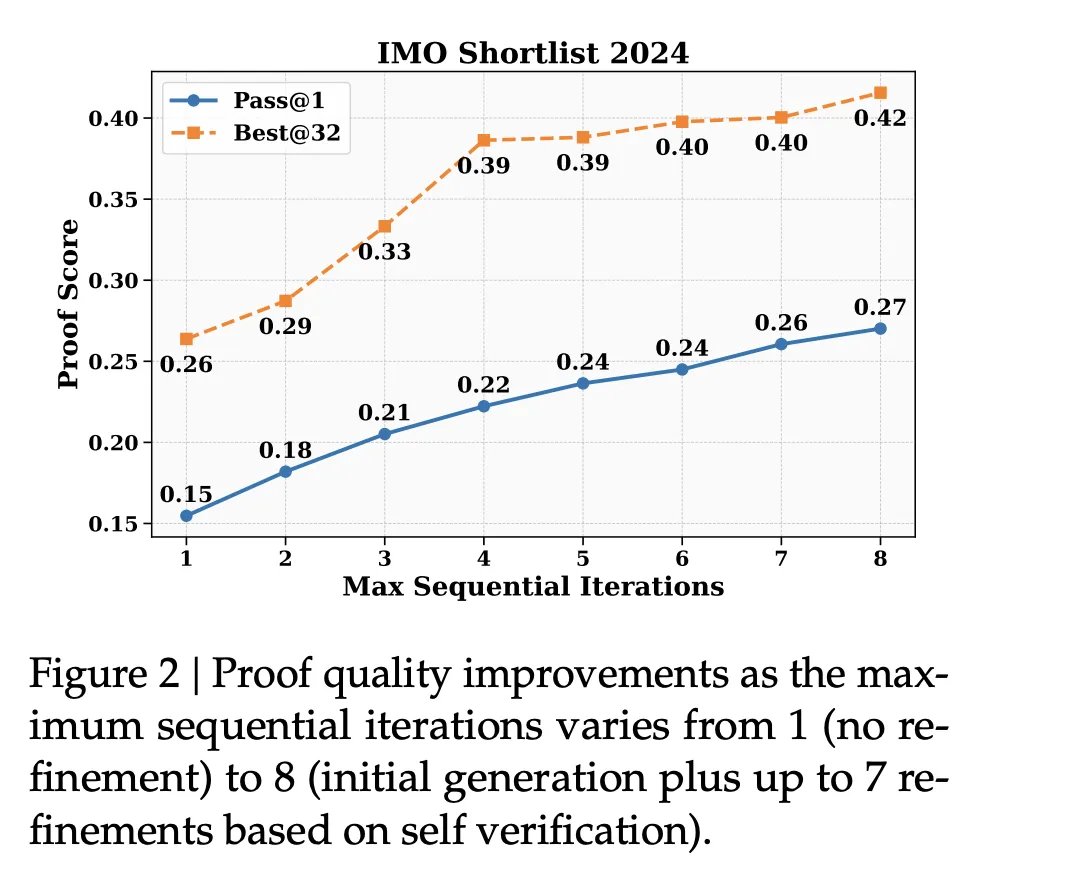

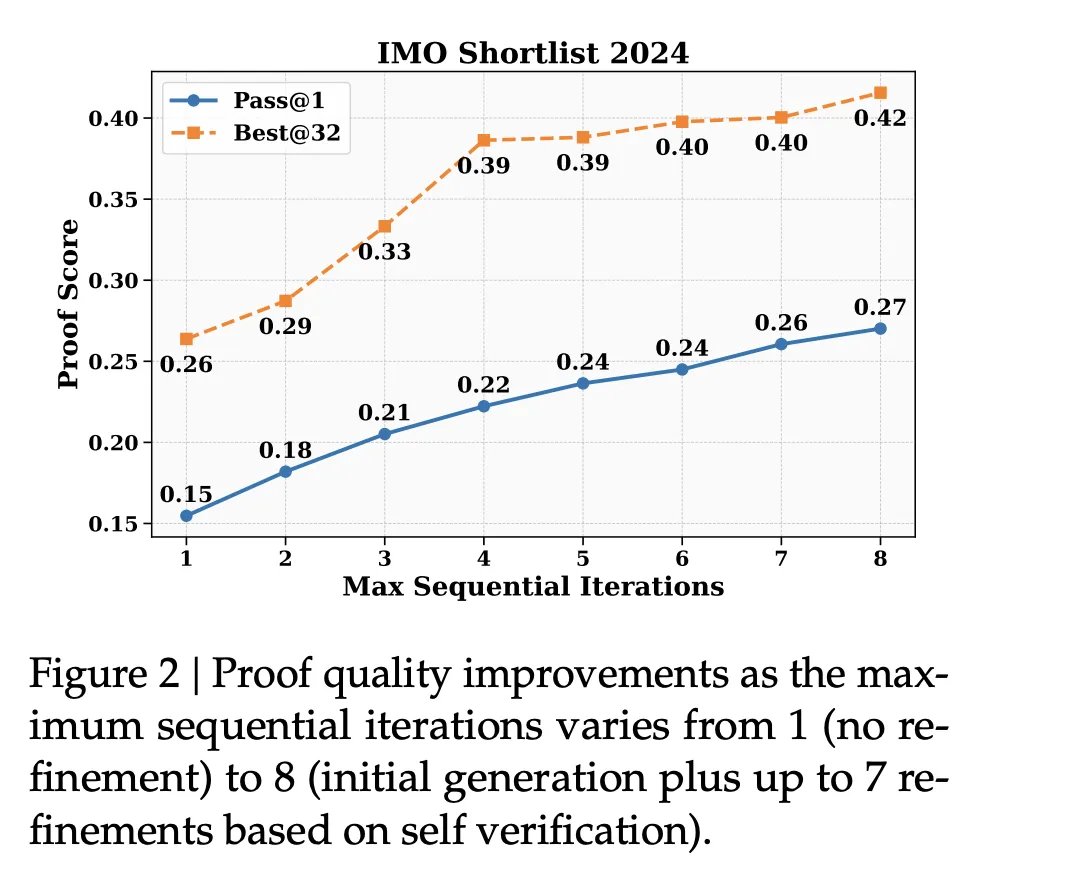

DeepSeek also exploits The maximum token context is 128K From the basic model. For difficult problems, the generator often cannot fix all the problems in one pass, because duplicate evidence plus analysis will override the context. In this case, the system is turned on Sequential improvement. It generates evidence and self-analysis, feeds them as context, and tells the model to produce new evidence that fixes previously discovered problems. This loop can be repeated multiple times, depending on the context budget.

Automatic measurement and marking verification

As the generator improves, it produces harder proofs, which are expensive to grade by hand. To keep the training data up to date, the research team offers a method Automatic labeling pipeline Based on metric verification.

For each candidate piece of evidence, the system tests several independent validation analyses, and then evaluates each analysis using a meta-validation tool. If multiple high-quality analyzes converge on the same serious issues, the evidence will be classified as invalid. If no valid issues pass the definition check, the proof is classified as valid. In final training iterations, this path replaces human labels, with spot checks confirming good agreement with experts.

Competition and benchmark results

The research team evaluated DeepSeekMath-V2 on several fronts:

On an internal set of 91 CNML level problems Covering algebra, geometry, number theory, combinatorics, and inequalities, DeepSeekMath-V2 is shown to achieve the highest average proof scores among Gemini 2.5 Pro, GPT 5 Higher Thinkingand DeepSeekMath-V2 in every category, as measured by their validator.

on IMO 2024 Shortlist,Sequential optimization with self-validation improves both the success on 1 and 32 best quality metrics while increasing the maximum number of optimization iterations.

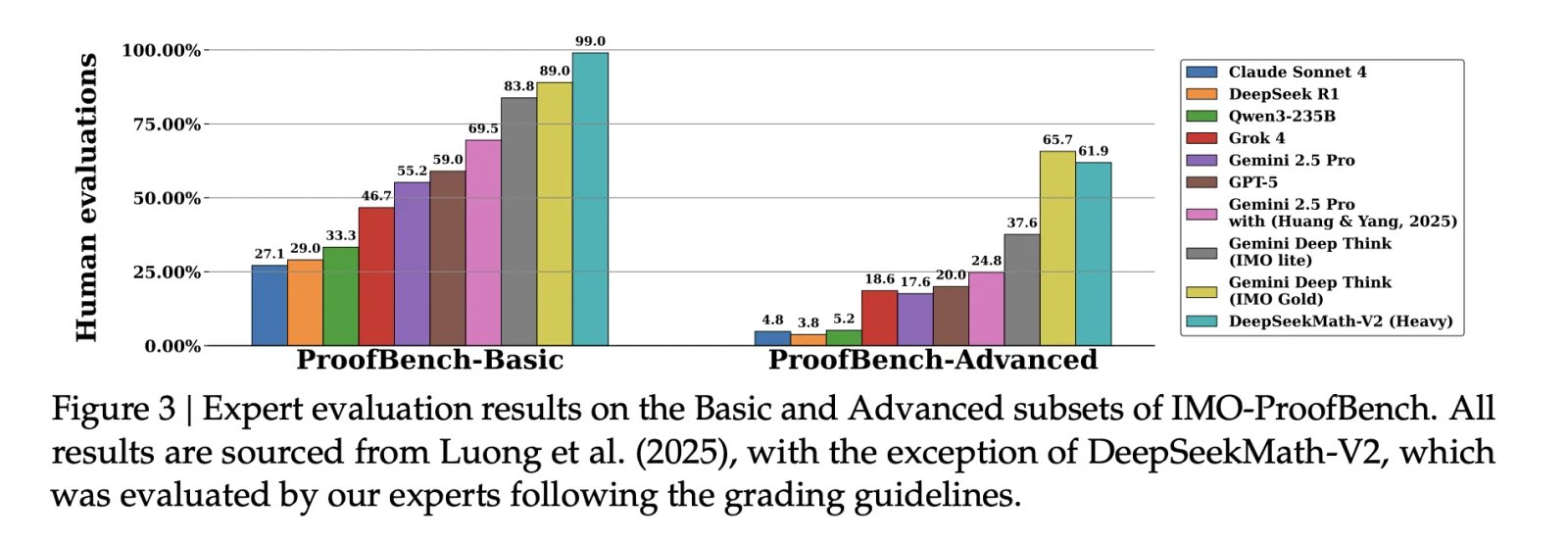

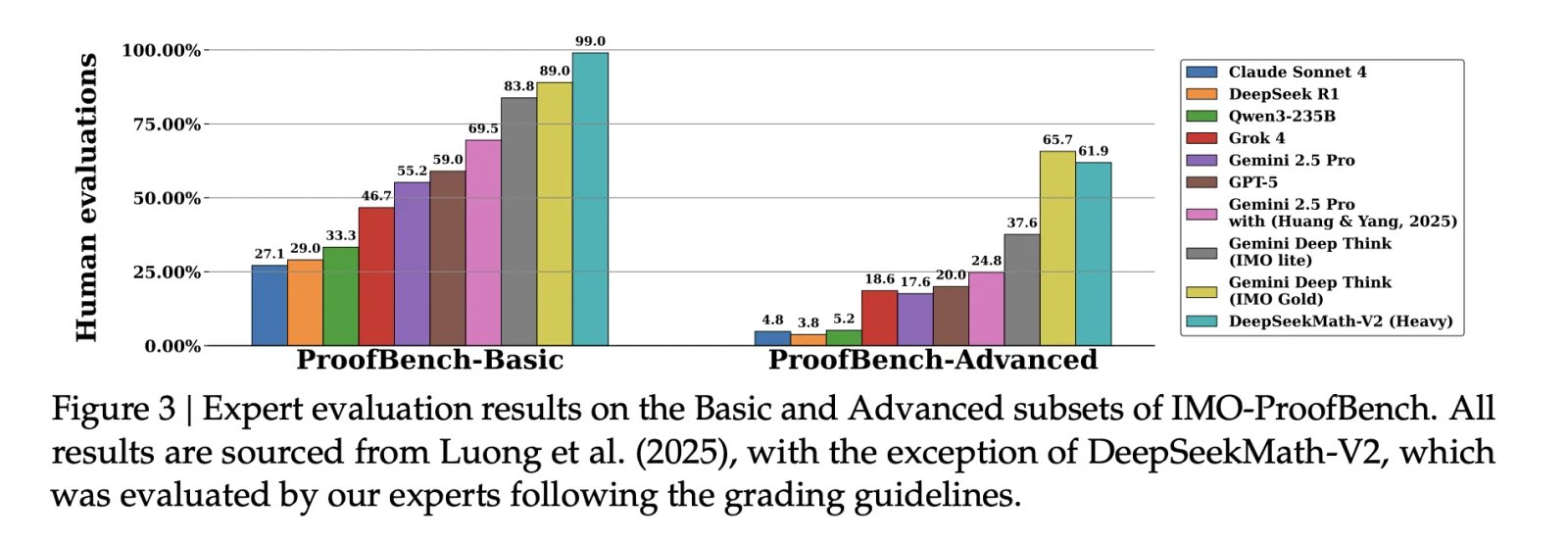

on IMO ProofBench,Expert Evaluation The above figure shows that DeepSeekMath-V2 outperforms Deep Mind Deep Think Emo Gold In the basic subgroup and remains competitive in the advanced subgroup, while clearly outperforming other large models.

For full competitions, reports:

- IMO 2025: 5 of 6 problems solved, Gold Medal level.

- Marketing Director 2024: 4 fully solved problems plus partial credit for one other problem, at the gold medal level.

- Putnam 2024: 11 of 12 issues have been fully resolved and the remaining issue has minor bugs 118 out of 120 pointshigher than the best human score of 90.

Key takeaways

- DeepSeekMath V2 is a 685B parameter model built on the DeepSeek V3.2 Exp Base, designed for self-verifying natural language theory, and released as open weights under the Apache 2.0 License.

- The main innovation is the first line of auditor training with a GRPO-trained auditor and a meta-checker who records proofs of accuracy, not just final answers, which directly addresses the gap between correct answers and correct reasoning.

- The proof generator is then trained on this validator and the meta-checker, using rewards that combine proof quality, agreement with self-assessment, and fidelity of analysis, as well as sequential improvement within a 128KB context to iteratively fix proofs.

- Accounting for testing time and large verification budgets, DeepSeekMath V2 reaches the Gold performance level at IMO 2025 and CMO 2024 and scores 118 out of 120 at Putnam 2024, beating the best human score that year.

Editorial notes

DeepSeekMath-V2 is an important step towards self-verifiable mathematical reasoning, as it directly addresses the gap between final correct answers and correct reasoning, using a validator, declarative verification and proof generator trained with GRPO on the Olympiad style guides and published on the 685B scale to reach Gold level performance at IMO 2025 and CMO 2024 and 118 out of a ~120 score at Putnam 2024. Overall, this release demonstrates that verifiable mathematical reasoning can Subjectively using open weights is now practically achievable for competition level problems.

verify Full paper,Model of weights at high frequency and Repo. Feel free to check out our website GitHub page for tutorials, codes, and notebooks. Also, feel free to follow us on twitter Don’t forget to join us 100k+ mil SubReddit And subscribe to Our newsletter. I am waiting! Are you on telegram? Now you can join us on Telegram too.

Asif Razzaq is CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of AI for social good. His most recent endeavor is the launch of the AI media platform, Marktechpost, which features in-depth coverage of machine learning and deep learning news that is technically sound and easy to understand by a broad audience. The platform has more than 2 million views per month, which shows its popularity among the masses.

🙌 FOLLOW MARKTECHPOST: Add us as a favorite source on Google.

Don’t miss more hot News like this! Click here to discover the latest in AI news!

2025-11-28 09:35:00