Meta AI Introduces DeepConf: First AI Method to Achieve 99.9% on AIME 2025 with Open-Source Models Using GPT-OSS-120B

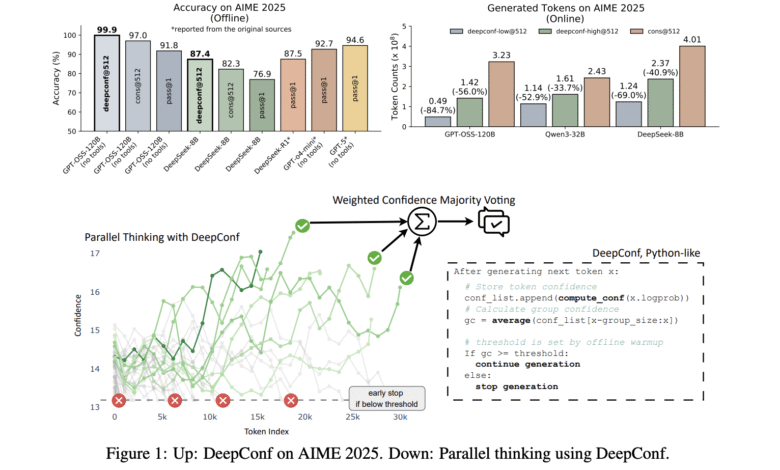

Large language models (LLMs) have reshaped AI reasoning, with parallel thinking and self-consistency methods often cited as pivotal advances. However, these techniques face a fundamental trade-off: sampling multiple reasoning paths improves accuracy, but at a steep computational cost. A team of researchers from Meta AI and UCSD introduces Deep Think with Confidence (DeepConf), a new approach that nearly eliminates this trade-off. DeepConf delivers state-of-the-art reasoning performance with dramatic efficiency gains. For example, it reaches 99.9% accuracy on the demanding AIME 2025 math competition using the open-source GPT-OSS-120B, while requiring up to 85% fewer generated tokens than conventional parallel thinking approaches.

Why DeepConf?

Parallel thinking (self-consistency with majority voting) is the de facto standard for boosting LLM reasoning: generate multiple candidate solutions, then pick the most common answer. While effective, this method has diminishing returns: accuracy plateaus or even declines as more reasoning paths are sampled, because low-quality reasoning traces can dilute the vote. Moreover, generating hundreds or thousands of traces per query is costly in both time and compute.
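As a point of reference, the majority-voting baseline described above can be sketched in a few lines of Python. This is only an illustration: the function name `majority_vote` and the sample answers are hypothetical, and extracting a final answer from each trace is assumed to have happened already.

```python
from collections import Counter

def majority_vote(answers):
    """Return the most frequent final answer among the sampled traces."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    # most_common(1) returns a list with one (answer, count) pair
    return Counter(answers).most_common(1)[0][0]

# Hypothetical final answers extracted from five sampled reasoning traces.
sampled_answers = ["204", "204", "210", "204", "198"]
print(majority_vote(sampled_answers))  # prints 204
```

Every trace counts equally here, which is exactly why a cluster of low-quality traces can outvote a smaller set of sound ones.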

DeepConf tackles these challenges by exploiting the LLM's own confidence signals. Rather than treating all reasoning traces equally, it dynamically filters out low-confidence paths, either during generation (online) or afterward (offline), and uses only the most reliable trajectories to inform the final answer. This strategy is model-agnostic, requires no training or hyperparameter tuning, and can be plugged into any existing model or serving framework with minimal code changes.

How DeepConf Works: Confidence as a Guide

DeepConf introduces several advances in how confidence is measured and used:

- Token confidence: For each generated token, compute the negative average log-probability of the top-k candidates. This gives a local measure of certainty.

- Group confidence: Average the token confidence over a sliding window (for example, 2048 tokens), providing a smoothed, intermediate signal of reasoning quality.

- Tail confidence: Focus on the final segment of the reasoning trace, where the answer usually appears, to catch late breakdowns.

- Lowest group confidence: Identify the least confident segment of the trace, which often signals a collapse in reasoning.

- Bottom-percentile confidence: Highlight the worst segments, which are the most predictive of errors.
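The five measures above can be sketched in plain Python as follows. This is a minimal illustration, not the authors' implementation: all function names, the `window`, `tail`, and `percent` parameters, and the toy probabilities are assumptions. Note that under this definition a peaked distribution yields a higher score, because the runner-up candidates have very negative log-probabilities.

```python
import math

def token_confidence(topk_logprobs):
    """Negative mean log-probability of the top-k candidates at one position."""
    return -sum(topk_logprobs) / len(topk_logprobs)

def group_confidences(token_confs, window):
    """Mean token confidence over each sliding window of `window` tokens."""
    if len(token_confs) <= window:
        return [sum(token_confs) / len(token_confs)]
    running = sum(token_confs[:window])
    groups = [running / window]
    for i in range(window, len(token_confs)):
        # slide the window by one token: add the new value, drop the oldest
        running += token_confs[i] - token_confs[i - window]
        groups.append(running / window)
    return groups

def tail_confidence(token_confs, tail):
    """Mean token confidence over the last `tail` tokens of the trace."""
    last = token_confs[-tail:]
    return sum(last) / len(last)

def lowest_group_confidence(groups):
    """Confidence of the least confident window in the trace."""
    return min(groups)

def bottom_percentile_confidence(groups, percent):
    """Mean over the lowest `percent` percent of window confidences."""
    k = max(1, math.ceil(len(groups) * percent / 100))
    return sum(sorted(groups)[:k]) / k

# Toy top-4 distributions: one peaked (confident), one flat (uncertain).
peaked = [math.log(p) for p in (0.97, 0.01, 0.01, 0.01)]
flat = [math.log(0.25)] * 4
print(token_confidence(peaked) > token_confidence(flat))  # prints True
```

A trace-level score for voting or filtering could then be any one of the last three functions applied to the output of `group_confidences`.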

These metrics are then used to weight votes (high-confidence traces count more) or to filter traces (only the most confident traces are kept). In online mode, DeepConf stops generating a trace as soon as its confidence drops below a dynamically calibrated threshold, which greatly reduces wasted compute.
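To make the weighting and filtering concrete, here is a small sketch. It assumes each finished trace has already been reduced to an `(answer, confidence)` pair; the function names, the `keep_fraction` parameter, and the numbers are hypothetical and chosen only to show how the outcome can differ from a plain majority vote.

```python
from collections import defaultdict

def filter_top_traces(traces, keep_fraction):
    """Keep the most confident fraction of (answer, confidence) traces."""
    k = max(1, round(len(traces) * keep_fraction))
    return sorted(traces, key=lambda t: t[1], reverse=True)[:k]

def weighted_vote(traces):
    """Sum confidence per answer and return the answer with the largest total."""
    totals = defaultdict(float)
    for answer, confidence in traces:
        totals[answer] += confidence
    return max(totals, key=totals.get)

# Hypothetical traces: "210" has more votes, "204" has more confident votes.
traces = [("204", 17.2), ("210", 6.1), ("210", 5.8), ("210", 6.3), ("204", 16.5)]

print(weighted_vote(traces))                          # prints 204
print(weighted_vote(filter_top_traces(traces, 0.4)))  # prints 204
```

In this toy case a plain majority vote would return "210" (three votes to two), while both the weighted vote and the filtered vote return "204".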

Key Results: Performance and Efficiency

DeepConf was evaluated across multiple reasoning benchmarks (AIME 2024/2025, HMMT 2025, BRUMO25, GPQA-Diamond) and models (DeepSeek-8B, Qwen3-8B/32B, GPT-OSS-20B/120B). The results are striking:

| Model | Dataset | Pass@1 Acc | Cons@512 Acc | DeepConf@512 Acc | Tokens Saved |
|---|---|---|---|---|---|
| GPT-OSS-120B | AIME 2025 | 91.8% | 97.0% | 99.9% | 84.7% |
| DeepSeek-8B | AIME 2024 | 83.0% | 86.7% | 93.3% | 77.9% |
| Qwen3-32B | AIME 2024 | 80.6% | 85.3% | 90.8% | 56.0% |

Performance boost: Across models and datasets, DeepConf improves accuracy by up to ~10 percentage points over standard majority voting, often saturating the benchmark's upper limit.

Ultra-efficient: By stopping low-confidence traces early, DeepConf reduces the total number of generated tokens by 43-85%, with no loss (and often a gain) in final accuracy.

Plug and play: DeepConf works out of the box with any model. It needs no fine-tuning, no hyperparameter search, and no changes to the underlying architecture. You can drop it into an existing serving stack (for example, vLLM) with ~50 lines of code.

Easy to deploy: The method is implemented as a lightweight extension to existing inference engines, requiring only access to token-level logprobs and a few lines of logic for confidence calculation and early stopping.

Simple Integration: Minimal Code, Maximum Impact

Implementing DeepConf is straightforward. For vLLM, the changes are minimal:

- Extend the logprobs processor to track sliding-window confidence.

- Add an early-stop check before each output is emitted.

- Pass the confidence thresholds via the API, with no model retraining.

This allows any OpenAI-compatible endpoint to support DeepConf with a single extra setting, making it trivial to adopt in production environments.
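The sliding-window tracking and early-stop check can be sketched independently of any serving engine. The code below is not the vLLM patch and does not use vLLM's API; the class `EarlyStopMonitor`, the helper `calibrate_threshold`, and all parameters are hypothetical. In particular, deriving the threshold from a small batch of warmup traces is an assumption made for illustration, since the article only says the threshold is dynamically calibrated.

```python
from collections import deque

class EarlyStopMonitor:
    """Tracks sliding-window confidence and flags when a trace should stop."""

    def __init__(self, window, threshold):
        self.window = window
        self.threshold = threshold
        self.recent = deque(maxlen=window)
        self.running_sum = 0.0

    def update(self, token_conf):
        """Record one token's confidence; return True if generation should stop."""
        if len(self.recent) == self.window:
            self.running_sum -= self.recent[0]  # value about to be evicted
        self.recent.append(token_conf)
        self.running_sum += token_conf
        if len(self.recent) < self.window:
            return False  # not enough tokens for a full window yet
        return self.running_sum / self.window < self.threshold

def calibrate_threshold(warmup_scores, keep_percent):
    """ASSUMPTION: choose the score that the top keep_percent of warmup traces reach."""
    ranked = sorted(warmup_scores, reverse=True)
    k = max(1, round(len(ranked) * keep_percent / 100))
    return ranked[k - 1]

# Hypothetical lowest-window confidences from four fully generated warmup traces.
threshold = calibrate_threshold([15.0, 12.0, 9.0, 14.0], keep_percent=50)

monitor = EarlyStopMonitor(window=3, threshold=threshold)
stop_at = None
for step, conf in enumerate([16.0, 15.0, 15.0, 13.0, 11.0, 10.0]):
    if monitor.update(conf):
        stop_at = step
        break
print(threshold, stop_at)  # prints 14.0 4
```

In a real engine, `update` would be called from the per-token logprobs hook, and a `True` result would end that request so no further tokens are generated for it.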

Conclusion

Meta AI's DeepConf represents a leap forward in LLM reasoning, delivering both peak accuracy and unprecedented efficiency. By dynamically leveraging the model's internal confidence, DeepConf achieves what was previously out of reach for open-source models: near-perfect results on elite reasoning tasks at a fraction of the computational cost.

FAQs

FAQ 1: How does DeepConf improve accuracy and efficiency compared to majority voting?

DeepConf's confidence-aware filtering and voting prioritizes traces with higher model certainty, which boosts accuracy by up to 10 percentage points across reasoning benchmarks compared to majority voting alone. At the same time, it terminates low-confidence traces early, which cuts the number of generated tokens by up to 85%.

FAQ 2: Can DeepConf be used with any language model or serving framework?

Yes. DeepConf is fully model-agnostic and can be integrated into any serving stack, including open-source and commercial models, without modification or retraining. Deployment requires only minimal changes (about 50 lines of code for vLLM) and relies on token logprobs to compute confidence and handle early stopping.

FAQ 3: Does DeepConf require retraining, special data, or complex tuning?

No. DeepConf operates entirely at inference time and requires no additional model training, fine-tuning, or hyperparameter searches. It uses only the built-in logprob outputs and works immediately with the standard API settings of leading frameworks; it is scalable, robust, and deployable on real workloads without interruption.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of an artificial intelligence media platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform draws more than 2 million monthly views, which shows its popularity with readers.


2025-08-27 16:40:00