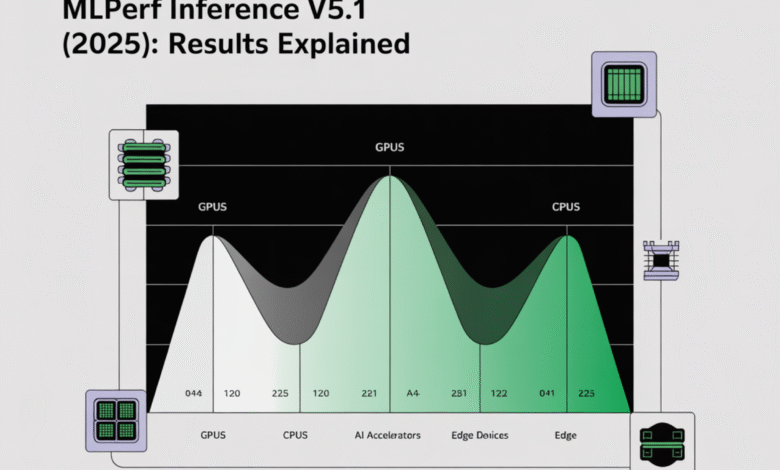

MLPerf Inference v5.1 (2025): Results Explained for GPUs, CPUs, and AI Accelerators

What does MLPerf Inference actually measure?

MLPerf Inference quantifies how fast a complete system (hardware + runtime + serving stack) executes fixed, pre-trained models under strict latency and accuracy constraints. Results are reported for the Datacenter and Edge suites, with standardized request patterns ("scenarios") generated by LoadGen to ensure architectural neutrality and reproducibility. The Closed division fixes the model and preprocessing for apples-to-apples comparisons; the Open division allows model changes, so its results are not strictly comparable. Availability tags (Available, Preview, and RDI, for research/development/internal) indicate whether configurations are shipping or experimental.

The 2025 update (v5.0 → v5.1): What changed?

The v5.1 results (published September 9, 2025) add three modern workloads and broaden interactive serving:

- DeepSeek-R1 (the first reasoning benchmark)

- Llama-3.1-8B (summarization), replacing GPT-J

- Whisper Large V3 (ASR)

This round recorded 27 submitters and the first appearances of AMD Instinct MI355X, Intel Arc Pro B60 48GB Turbo, NVIDIA GB300, RTX 4000 Ada-PCIe-20GB, and RTX Pro 6000 Blackwell Server Edition. Interactive scenarios (tight TTFT/TPOT limits) were expanded beyond a single model to capture agent/chat workloads.

Scenarios: The four serving patterns you must map to real workloads

- Offline: maximize throughput with no latency bound; batching and scheduling dominate.

- Server: Poisson arrivals with p99 latency bounds; the closest match to chat/agent backends.

- Single-Stream / Multi-Stream (Edge emphasis): strict per-stream tail latency; Multi-Stream stresses concurrency at fixed inter-arrival intervals.

Each scenario has a defined metric (for example, maximum Poisson throughput for Server; throughput for Offline).
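To make the difference between the Offline and Server metrics concrete, here is a minimal sketch of Poisson arrivals hitting a single worker. It is not LoadGen: the function names, the fixed 10 ms service time, and the single-worker FIFO model are all assumptions chosen for illustration.

```python
import math
import random


def poisson_arrivals(rate_qps, n_queries, seed=0):
    """Arrival timestamps (seconds) with exponential inter-arrival gaps."""
    rng = random.Random(seed)
    now = 0.0
    arrivals = []
    for _ in range(n_queries):
        now += rng.expovariate(rate_qps)  # mean gap is 1 / rate_qps
        arrivals.append(now)
    return arrivals


def fifo_latencies(arrivals, service_time_s):
    """Per-query latency for one FIFO worker with a fixed service time."""
    free_at = 0.0
    latencies = []
    for arrival in arrivals:
        start = max(arrival, free_at)       # wait if the worker is busy
        free_at = start + service_time_s
        latencies.append(free_at - arrival)  # queueing delay + service time
    return latencies


def p99(values):
    """Nearest-rank 99th percentile."""
    ordered = sorted(values)
    rank = math.ceil(0.99 * len(ordered))
    return ordered[rank - 1]


def server_p99(rate_qps, service_time_s=0.010, n_queries=20000, seed=0):
    """p99 latency of the toy worker at a given Poisson arrival rate."""
    arrivals = poisson_arrivals(rate_qps, n_queries, seed)
    return p99(fifo_latencies(arrivals, service_time_s))


if __name__ == "__main__":
    for rate in (50.0, 80.0, 95.0):
        print(f"{rate:5.1f} qps -> p99 {server_p99(rate) * 1000:.1f} ms")
```

With a 10 ms service time, this toy worker could sustain 100 queries per second if every query were available up front, which is the Offline view. Under Poisson arrivals the tail latency grows as the rate approaches that ceiling, so the highest rate that still meets a p99 bound is lower. That gap is why a Server result and an Offline result for the same system should not be compared with each other.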

LLMs: TTFT and TPOT are now first-class

LLM tests report TTFT (time to first token) and TPOT (time per output token). v5.0 introduced stricter interactive limits for Llama-2-70B (p99 TTFT 450 ms, TPOT 40 ms) to reflect user-perceived responsiveness. The long-context Llama-3.1-405B keeps higher bounds (p99 TTFT 6 s, TPOT 175 ms) because of model size and context length. These constraints carry into v5.1 alongside the new LLM and reasoning tasks.
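As an illustration of the two metrics, the sketch below derives TTFT and TPOT for one request from token timestamps. The function name is hypothetical, and averaging the gaps after the first token over `n - 1` tokens is an assumption about how to compute TPOT, not a quotation of the MLPerf rules.

```python
def ttft_and_tpot(request_time_s, token_times_s):
    """Return (TTFT, TPOT) in seconds for a single request.

    request_time_s: when the request was issued.
    token_times_s:  completion time of each output token, in order.
    """
    if not token_times_s:
        raise ValueError("at least one output token is required")
    ttft = token_times_s[0] - request_time_s
    n_tokens = len(token_times_s)
    if n_tokens == 1:
        return ttft, 0.0  # no inter-token gap to measure
    # Assumption: TPOT is the mean gap between tokens after the first one.
    tpot = (token_times_s[-1] - token_times_s[0]) / (n_tokens - 1)
    return ttft, tpot


if __name__ == "__main__":
    ttft, tpot = ttft_and_tpot(0.0, [0.40, 0.44, 0.48, 0.52])
    print(f"TTFT {ttft * 1000:.0f} ms, TPOT {tpot * 1000:.0f} ms")
```

A request with these timestamps would sit just inside the Llama-2-70B interactive limits quoted above; the benchmark applies its limits over the distribution of requests, not to a single one.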

Key v5.1 entries and their quality/latency gates (abbrev., TTFT/TPOT):

- LLM Q&A (Llama-2-70B, OpenOrca): Conversational 2000 ms/200 ms; Interactive 450 ms/40 ms; 99% and 99.9% accuracy targets.

- LLM summarization (Llama-3.1-8B, CNN/DailyMail): Conversational 2000 ms/100 ms; Interactive 500 ms/30 ms.

- Reasoning (DeepSeek-R1): TTFT 2000 ms / TPOT 80 ms; 99% of FP16 (exact-match baseline).

- ASR (Whisper Large V3, LibriSpeech): WER-based quality (Datacenter + Edge).

- Long context (Llama-3.1-405B): TTFT 6000 ms, TPOT 175 ms.

- Image (SDXL 1.0): FID/CLIP ranges; Server has a 20 s constraint.

Legacy CV/NLP benchmarks (ResNet-50, RetinaNet, BERT-L, DLRM, 3D-UNet) remain for continuity.
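The LLM latency gates listed above can be kept in a small lookup table when checking your own measurements. This is a sketch: the dictionary keys, the `"default"` mode label, and the function name are hypothetical, and treating both numbers as p99 limits is an assumption; only the millisecond values come from the list.

```python
# (model, mode) -> (TTFT limit in ms, TPOT limit in ms), copied from the list above.
# The key strings and the "default" mode label are illustrative, not MLPerf names.
GATES_MS = {
    ("llama-2-70b", "conversational"): (2000, 200),
    ("llama-2-70b", "interactive"): (450, 40),
    ("llama-3.1-8b", "conversational"): (2000, 100),
    ("llama-3.1-8b", "interactive"): (500, 30),
    ("deepseek-r1", "default"): (2000, 80),
    ("llama-3.1-405b", "default"): (6000, 175),
}


def meets_gate(model, mode, p99_ttft_ms, p99_tpot_ms):
    """True if measured tail latencies fit inside the gate for (model, mode)."""
    ttft_limit, tpot_limit = GATES_MS[(model, mode)]
    return p99_ttft_ms <= ttft_limit and p99_tpot_ms <= tpot_limit


if __name__ == "__main__":
    # The same measurement can pass one mode and fail the stricter one.
    print(meets_gate("llama-2-70b", "conversational", 900, 60))
    print(meets_gate("llama-2-70b", "interactive", 900, 60))
```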

Power Results: How to Read Energy Claims

MLPerf Power (optional) reports system wall-plug energy for the same runs (Server/Offline: system power; Single-Stream/Multi-Stream: energy per stream). Only measured runs are valid for energy-efficiency comparisons; TDPs and vendor estimates are out of scope. v5.1 includes Datacenter and Edge power submissions, but broader participation is encouraged.
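When a result includes measured power, a derived efficiency figure is simple arithmetic. The helpers below are a sketch with hypothetical names; they are not MLPerf-defined metrics, and the inputs must come from a measured run rather than from TDP or a vendor estimate.

```python
def samples_per_joule(throughput_sps, avg_system_power_w):
    """Derived efficiency for Server/Offline: (samples/s) / (J/s) = samples/J."""
    if avg_system_power_w <= 0:
        raise ValueError("average system power must be positive")
    return throughput_sps / avg_system_power_w


def joules_per_query(total_energy_j, n_queries):
    """Derived per-query energy for stream scenarios."""
    if n_queries <= 0:
        raise ValueError("n_queries must be positive")
    return total_energy_j / n_queries


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not published results.
    print(samples_per_joule(1000.0, 500.0))
    print(joules_per_query(50.0, 100))
```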

How to read the tables without fooling yourself?

- Compare Closed with Closed only; Open runs may use different models or quantization.

- Match accuracy targets (99% vs. 99.9%); throughput often drops at the stricter quality level.

- Normalize cautiously: MLPerf reports system-level throughput under constraints. Dividing by accelerator count yields a derived "per-chip" number that MLPerf does not define as a primary metric. Use it only for sanity checks, not marketing claims.

- Filter by availability (prefer Available) and include the Power columns when efficiency matters.
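The checklist above maps onto a filter over result rows. The sketch below assumes each row is a dictionary with hypothetical field names (`division`, `model`, `scenario`, `accuracy`, `availability`, `throughput`, `accelerators`); the real MLCommons result tables use their own column names, and the example rows are made-up placeholders, not published results.

```python
def comparable_rows(rows, *, division, model, scenario, accuracy,
                    availability="Available"):
    """Keep only rows that match on every axis that affects comparability."""
    return [
        row for row in rows
        if row["division"] == division
        and row["model"] == model
        and row["scenario"] == scenario
        and row["accuracy"] == accuracy
        and row["availability"] == availability
    ]


def per_accelerator(row):
    """Derived heuristic only: MLPerf reports system-level throughput."""
    return row["throughput"] / row["accelerators"]


# Made-up placeholder rows for illustration; not real MLPerf results.
EXAMPLE_ROWS = [
    {"system": "sys-a", "division": "Closed", "model": "llama-2-70b",
     "scenario": "Server", "accuracy": "99.9%", "availability": "Available",
     "throughput": 8000.0, "accelerators": 8},
    {"system": "sys-b", "division": "Open", "model": "llama-2-70b",
     "scenario": "Server", "accuracy": "99.9%", "availability": "Available",
     "throughput": 12000.0, "accelerators": 8},
    {"system": "sys-c", "division": "Closed", "model": "llama-2-70b",
     "scenario": "Server", "accuracy": "99%", "availability": "Preview",
     "throughput": 9000.0, "accelerators": 4},
]

if __name__ == "__main__":
    kept = comparable_rows(EXAMPLE_ROWS, division="Closed", model="llama-2-70b",
                           scenario="Server", accuracy="99.9%")
    for row in kept:
        print(row["system"], per_accelerator(row))
```

Filtering first and normalizing second keeps the derived per-accelerator figure from being computed across rows that were never comparable in the first place.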

Interpreting the 2025 results: GPUs, CPUs, and other accelerators

GPUs (rack-scale to single-node). New silicon shows up prominently in Server-Interactive (tight TTFT/TPOT) and in long-context workloads, where scheduler and KV-cache efficiency matter as much as raw FLOPs. Rack-scale systems (for example, GB300 NVL72) post the highest aggregate throughput; normalize by both accelerator and host counts before comparing with single-node entries, and keep scenario and accuracy identical.

CPUs (standalone baselines + host effects). CPU-only entries remain useful baselines and highlight the preprocessing and dispatch overheads that can bottleneck accelerators in Server mode. New Xeon 6 results and mixed CPU+GPU stacks appear in v5.1; check host generation and memory configuration when comparing systems with similar accelerators.

Alternative accelerators. v5.1 increases architectural diversity (GPUs from multiple vendors plus new workstation/server SKUs). Where Open-division submissions appear (for example, pruned or low-precision variants), verify that any cross-system comparison holds division, model, dataset, scenario, and accuracy constant.

Practical selection playbook (map benchmarks to SLAs)

- Interactive chat/agents → Server-Interactive on Llama-2-70B/Llama-3.1-8B/DeepSeek-R1 (match latency and accuracy; scrutinize p99 TTFT/TPOT).

- Batch summarization/ETL → Offline on Llama-3.1-8B; throughput per rack is the cost driver.

- ASR front ends → Whisper V3 Server with a tail-latency bound; memory bandwidth and audio pre/post-processing matter.

- Long-context analytics → Llama-3.1-405B; evaluate whether your UX tolerates 6 s TTFT / 175 ms TPOT.

What does the 2025 cycle signal?

- Interactive LLM serving is table stakes. Tight TTFT/TPOT limits in v5.x make scheduling, batching, paged attention, and KV-cache management visible in the results; expect different leaders than in pure Offline.

- Reasoning is now benchmarked. DeepSeek-R1 stresses control flow and memory traffic differently from next-token generation.

- Broader modality coverage. Whisper V3 and SDXL exercise pipelines beyond token decoding, surfacing I/O and bandwidth limits.

Summary

In summary, MLPerf Inference v5.1 makes inference comparisons actionable only when they are grounded in the benchmark's rules: align on the Closed division, match scenario and accuracy (including the LLM TTFT/TPOT limits for interactive serving), and prefer Available systems with measured Power when reasoning about efficiency. Treat any per-device splits as derived heuristics, because MLPerf reports system-level performance. The 2025 cycle expands coverage with DeepSeek-R1, Llama-3.1-8B, and Whisper Large V3, plus broader silicon participation, so procurement should filter results to the workloads that mirror production SLAs (Server-Interactive for chat/agents, Offline for batch) and validate claims directly in the MLCommons results pages and power methodology.

Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.

2025-10-01 09:38:00